Building stuff on larger, more capable machines that will eventually run in smaller, more constrained environments

Statement of intent

As a hobbyist and tinkerer I would like to be able to assemble containers which, at the press of a button, can be run on a multitude of different target platforms.

Reifying the intent

My build box is a Windows 10 laptop running Docker Desktop with Docker 19.03.8. Docker 19.03 is a significant release; in particular, it includes buildx, an experimental feature. If you google “docker buildx arm”, you’ll learn that about a year ago Docker and Arm announced a partnership in which Docker would provide a new capability, built on the BuildKit engine, for creating cross-platform images that run on Arm and other Linux machines.

How convenient is that?!

In this post we’ll use the experimental buildx command, through the docker CLI, to leverage BuildKit and create a container image that deploys to and runs in a Raspberry Pi 4 Kubernetes cluster. There are a lot of behind-the-scenes details you’ll probably want to know, which is why I mentioned the search terms above. Here we’ll focus on getting it done rather than on how it works, which has been described numerous times already.

To get started we’ll use a project that we worked with earlier in a Simple micro service in Go. If you haven’t done so already, go ahead and download it into your Go source folder. I’ve made some minor changes to the project: there’s now another file called Dockerfile-linux, which I use locally to build images and push them to my Docker Hub account.

New multi architecture Dockerfile-linux

FROM golang

ARG TARGETPLATFORM

ARG BUILDPLATFORM

# Add some extra debug to the output

RUN echo "Building on $BUILDPLATFORM, for $TARGETPLATFORM"

ADD . /go/src/github.com/mjd/basic-svc

# Build our app for Linux - CGO_ENABLED=0 GOOS=linux GOARCH=arm GOARM=7 arm32v7

RUN go install github.com/mjd/basic-svc && rm -rf /go/src

# Run the basic-svc command by default when the container starts.

ENTRYPOINT ["/go/bin/basic-svc"]

# Document that the service listens on port 8083

EXPOSE 8083

TARGETPLATFORM and BUILDPLATFORM are referenced during the build to aid with debugging. With Docker desktop v19.03 installed, you should enable the experimental feature, restart the Docker Engine and verify that buildx is working.

# verify buildx by listing the default builder

$ docker buildx ls

NAME/NODE DRIVER/ENDPOINT STATUS  PLATFORMS
default   docker
  default default         running linux/amd64, linux/arm64, linux/ppc64le, linux/s390x, linux/386, linux/arm/v7, linux/arm/v6

The legacy default builder uses the docker driver, which can’t produce a single multi-platform image, so instead we’ll create a new builder that can.

# Create a new builder, capable of building images for our targets

$ docker buildx create --name nix-arm

nix-arm

# list the builders again and we see the newly created nix-arm

$ docker buildx ls

NAME/NODE  DRIVER/ENDPOINT                STATUS  PLATFORMS
nix-arm    docker-container
  nix-arm0 npipe:////./pipe/docker_engine running linux/amd64, linux/arm64, linux/ppc64le, linux/s390x, linux/386, linux/arm/v7, linux/arm/v6
default *  docker
  default  default                        running linux/amd64, linux/arm64, linux/ppc64le, linux/s390x, linux/386, linux/arm/v7, linux/arm/v6

To make use of a specific builder, we tell the docker CLI to use it. In the code below we select our new builder and also run the inspect command on it.

# Let docker cli know which builder to use

$ docker buildx use nix-arm

$ docker buildx inspect

Name: nix-arm

Driver: docker-container

Nodes:

Name: nix-arm0

Endpoint: npipe:////./pipe/docker_engine

Status: running

Platforms: linux/amd64, linux/arm64, linux/ppc64le, linux/s390x, linux/386, linux/arm/v7, linux/arm/v6

With our builder created and set we can now run the build, which will push images for each target platform to Docker Hub. Be sure to change my Docker Hub location (-t mitchd/basic-svc-linux) to yours.

# create images for multiple platforms and push images to Docker Hub

$ docker buildx build --platform linux/amd64,linux/arm64,linux/arm/v7 \
  --push -t mitchd/basic-svc-linux -f Dockerfile-linux .

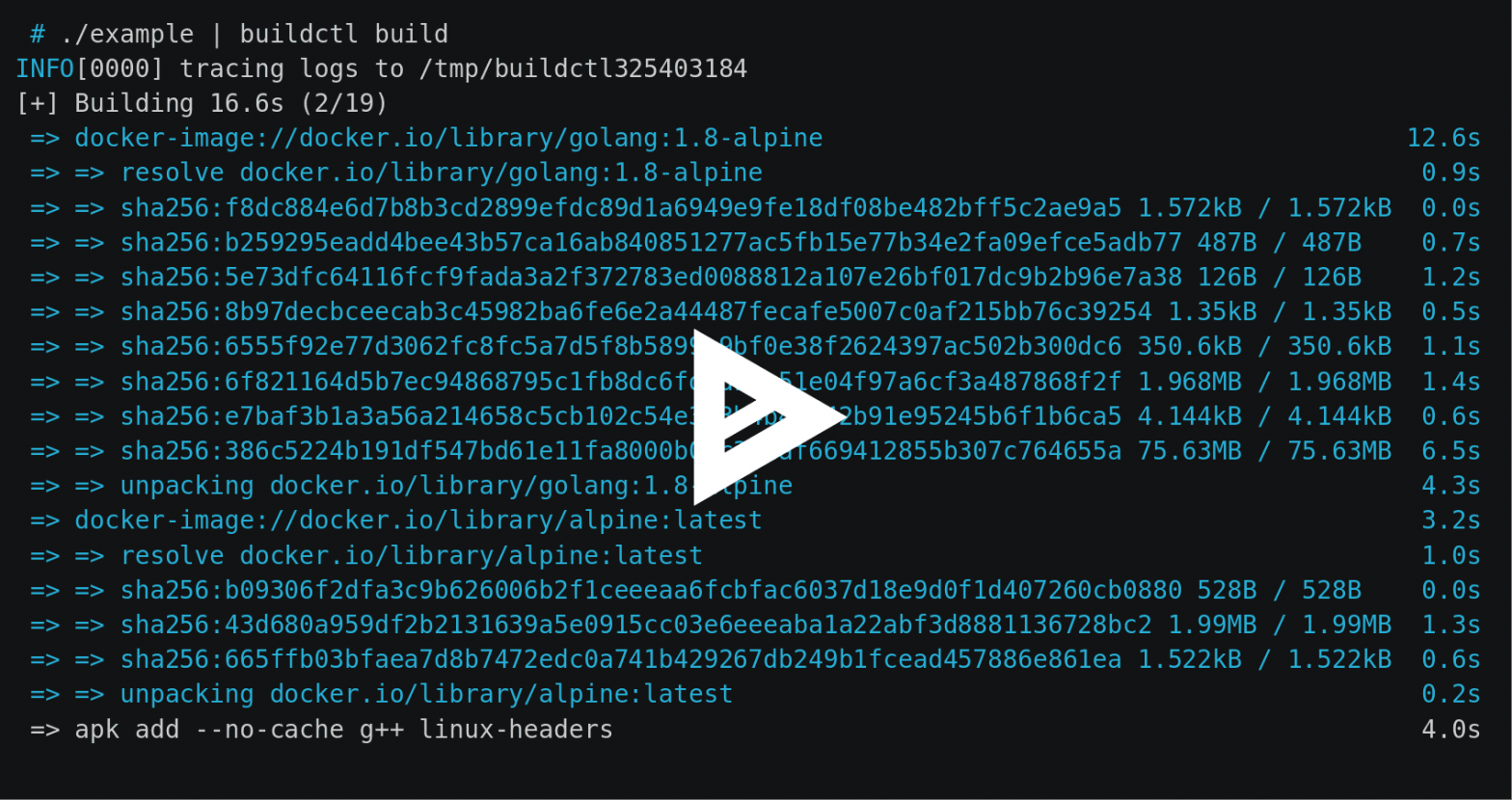

# lots of image pulls and intermediate results

...

1.1s

=> [linux/arm64 3/4] ADD . /go/src/github.com/mjd/basic-svc 0.0s

=> [linux/arm64 4/4] RUN go install github.com/mjd/basic-svc && rm -rf /go/src 6.1s

=> exporting to image 11.3s

=> => exporting layers 4.1s

=> => exporting manifest sha256:d22bb66f31f9bf57f1252f5b770219808c428638ba823e12d481f55a2e600120 0.0s

=> => exporting config sha256:ce463347999a38dcc25295bd455de4aaf19290dd987f2c77cb6d3a1a02b77826 0.0s

=> => exporting manifest sha256:c8b84bbefccb992743a3dde2a453c483941b1b16c45fdf2b5a062382f83dcc3a 0.0s

=> => exporting config sha256:ea56a06d8afc774db80207608c55dd1de5d09de3c7e0194fd32bf2af8a1c5788 0.0s

=> => exporting manifest sha256:e7ef6fc6118ffd0163cff3e40e53228ca13142f434e02d3bb143835d70e82074 0.0s

=> => exporting config sha256:f469c4777fe897ffdd386922110efa84d13a8d2e3b58bf5f1f0b376ce69547d7 0.0s

=> => exporting manifest list sha256:d02d8d6ee6a6cbce49653490a7b16f38468d5647f0f26bd9ebe94d28267c6e4b 0.0s

=> => pushing layers 5.7s

=> => pushing manifest for docker.io/mitchd/basic-svc-linux:latest
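Each entry in the --platform list follows the os/arch[/variant] form, and the commented hint in Dockerfile-linux (GOOS=linux GOARCH=arm GOARM=7) shows the Go settings that correspond to one of them. The sketch below spells out that correspondence. GoEnv is a hypothetical helper written for this post, not part of basic-svc or of Docker, and it assumes that the variant only matters for 32-bit Arm.

```go
package main

import (
	"fmt"
	"strings"
)

// GoEnv converts a platform string such as "linux/arm/v7" into the
// GOOS/GOARCH/GOARM values a Go cross-compile would use.
// Hypothetical helper for illustration only.
func GoEnv(platform string) (map[string]string, error) {
	parts := strings.Split(platform, "/")
	if len(parts) < 2 || len(parts) > 3 || parts[0] == "" || parts[1] == "" {
		return nil, fmt.Errorf("unexpected platform %q, want os/arch[/variant]", platform)
	}
	env := map[string]string{"GOOS": parts[0], "GOARCH": parts[1]}
	// Assumption: only 32-bit Arm needs the variant, as GOARM=6 or GOARM=7.
	if parts[1] == "arm" && len(parts) == 3 {
		env["GOARM"] = strings.TrimPrefix(parts[2], "v")
	}
	return env, nil
}

func main() {
	// The same three targets that were passed to docker buildx build.
	for _, p := range []string{"linux/amd64", "linux/arm64", "linux/arm/v7"} {
		env, err := GoEnv(p)
		if err != nil {
			fmt.Println("error:", err)
			continue
		}
		line := "GOOS=" + env["GOOS"] + " GOARCH=" + env["GOARCH"]
		if v, ok := env["GOARM"]; ok {
			line += " GOARM=" + v
		}
		fmt.Printf("%-13s %s\n", p, line)
	}
}
```

With buildx and emulation, the Dockerfile doesn't need to set these variables itself, since each platform's build stage runs as if it were on the target. The mapping is mostly useful if you later want to cross-compile on the build platform instead.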

Verify that the images were created for the correct target using imagetools inspect.

# inspect the manifest list that was pushed to Docker Hub

$ docker buildx imagetools inspect mitchd/basic-svc-linux:latest

Name: docker.io/mitchd/basic-svc-linux:latest

MediaType: application/vnd.docker.distribution.manifest.list.v2+json

Digest: sha256:d02d8d6ee6a6cbce49653490a7b16f38468d5647f0f26bd9ebe94d28267c6e4b

Manifests:

Name: docker.io/mitchd/basic-svc-linux:latest@sha256:d22bb66f31f9bf57f1252f5b770219808c428638ba823e12d481f55a2e600120

MediaType: application/vnd.docker.distribution.manifest.v2+json

Platform: linux/amd64

Name: docker.io/mitchd/basic-svc-linux:latest@sha256:c8b84bbefccb992743a3dde2a453c483941b1b16c45fdf2b5a062382f83dcc3a

MediaType: application/vnd.docker.distribution.manifest.v2+json

Platform: linux/arm64

Name: docker.io/mitchd/basic-svc-linux:latest@sha256:e7ef6fc6118ffd0163cff3e40e53228ca13142f434e02d3bb143835d70e82074

MediaType: application/vnd.docker.distribution.manifest.v2+json

Platform: linux/arm/v7
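If you'd rather check the result in code than by eye, the same information is available as JSON. The sketch below assumes you have the raw manifest list on hand (for example from imagetools inspect with the --raw flag) and confirms that every platform we asked for is present. The struct covers only the fields used here, the sample document is hand-written from the digests in the output above, and Platforms and Missing are hypothetical helper names.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// manifestList holds only the manifest list fields this check needs.
type manifestList struct {
	Manifests []struct {
		Digest   string `json:"digest"`
		Platform struct {
			OS           string `json:"os"`
			Architecture string `json:"architecture"`
			Variant      string `json:"variant,omitempty"`
		} `json:"platform"`
	} `json:"manifests"`
}

// Platforms returns the os/arch[/variant] strings found in a raw manifest list.
func Platforms(raw []byte) ([]string, error) {
	var ml manifestList
	if err := json.Unmarshal(raw, &ml); err != nil {
		return nil, fmt.Errorf("decoding manifest list: %w", err)
	}
	var out []string
	for _, m := range ml.Manifests {
		p := m.Platform.OS + "/" + m.Platform.Architecture
		if m.Platform.Variant != "" {
			p += "/" + m.Platform.Variant
		}
		out = append(out, p)
	}
	return out, nil
}

// Missing lists the wanted platforms that are absent from have.
func Missing(have, want []string) []string {
	seen := make(map[string]bool, len(have))
	for _, p := range have {
		seen[p] = true
	}
	var missing []string
	for _, p := range want {
		if !seen[p] {
			missing = append(missing, p)
		}
	}
	return missing
}

// sample is a trimmed, hand-written stand-in for the pushed manifest list.
const sample = `{
  "schemaVersion": 2,
  "mediaType": "application/vnd.docker.distribution.manifest.list.v2+json",
  "manifests": [
    {"digest": "sha256:d22bb66f31f9bf57f1252f5b770219808c428638ba823e12d481f55a2e600120",
     "platform": {"architecture": "amd64", "os": "linux"}},
    {"digest": "sha256:c8b84bbefccb992743a3dde2a453c483941b1b16c45fdf2b5a062382f83dcc3a",
     "platform": {"architecture": "arm64", "os": "linux"}},
    {"digest": "sha256:e7ef6fc6118ffd0163cff3e40e53228ca13142f434e02d3bb143835d70e82074",
     "platform": {"architecture": "arm", "os": "linux", "variant": "v7"}}
  ]
}`

func main() {
	have, err := Platforms([]byte(sample))
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	want := []string{"linux/amd64", "linux/arm64", "linux/arm/v7"}
	if missing := Missing(have, want); len(missing) > 0 {
		fmt.Println("missing platforms:", strings.Join(missing, ", "))
		return
	}
	fmt.Println("all requested platforms present:", strings.Join(have, ", "))
}
```

The comparison is a plain string match, so a registry that reports a variant for arm64 would need that variant included in the wanted list.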

With our Raspberry Pi 4 image created and pushed to Docker Hub, let’s see if we can deploy it to the Kubernetes cluster we created in my post: moving Kubernetes closer to the bare metal. As we continue in this example we’re going to create a pod, service and gateway on the Rancher K3S Kubernetes cluster from that post.

Copy the following to deploy-basic-svc.yaml to create our service, gateway and pod

apiVersion: apps/v1
kind: Deployment
metadata:
  name: basic-svc
  labels:
    app: basic-svc
spec:
  replicas: 1
  selector:
    matchLabels:
      app: basic-svc
  template:
    metadata:
      labels:
        app: basic-svc
    spec:
      containers:
      - name: basic-svc-armv7
        image: mitchd/basic-svc-linux
        ports:
        - containerPort: 8083
---
apiVersion: v1
kind: Service
metadata:
  name: demo-service
spec:
  selector:
    app: basic-svc
  ports:
  - protocol: TCP
    port: 80
    targetPort: 8083
---
apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  name: basic-svc-ingress
  annotations:
    kubernetes.io/ingress.class: "traefik"
spec:
  rules:
  - http:
      paths:
      - path: /
        backend:
          serviceName: demo-service
          servicePort: 80

In the snippet below we’ll set a watch using a shell on the K3S server to verify our components are created.

$ sudo watch kubectl get all

In another shell we’ll run the yaml file we created above to deploy our pod and make it accessible to the outside. It may take a few minutes to download the basic-svc from Docker Hub and deploy it to a container in our K3S cluster, so be patient.

# Apply the basic-svc yaml

$ sudo kubectl apply -f deploy-basic-svc.yaml

In the shell running the watch command, you should see the gateway, service and pod being deployed into the K3S cluster.

Every 2.0s: kubectl get all viper: Sun Apr 12 19:22:34 2020

NAME READY STATUS RESTARTS AGE

pod/basic-svc-7bc858fb89-59sfg 1/1 Running 0 3h33m

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kubernetes ClusterIP 10.43.0.1 <none> 443/TCP 9d

service/demo-service ClusterIP 10.43.143.187 <none> 80/TCP 3h33m

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/basic-svc 1/1 1 1 3h33m

NAME DESIRED CURRENT READY AGE

replicaset.apps/basic-svc-7bc858fb89 1 1 1 3h33m

Testing our pod

# using httpie connect to the IP address for your service, mine is 10.43.143.187

# using curl: curl http://10.43.143.187/health

$ http 10.43.143.187/health

HTTP/1.1 200 OK

Content-Length: 65

Content-Type: application/json

Date: Sun, 12 Apr 2020 23:31:58 GMT

{

"Hostname": "basic-svc-7bc858fb89-59sfg",

"IpAddress": "10.42.2.9"

}

Cleanup

# To remove our demo code

$ sudo kubectl delete -f deploy-basic-svc.yaml

In my basic-svc git repository I’ve added a Helm Chart which deploys the basic service as we just did above. Be sure to pull the latest changes if you have an older copy of the repository.

You might recall that one of the last steps we performed when we installed K3S in moving Kubernetes closer to the bare metal was to install Helm. We didn’t exercise Helm in that post, so I’ve included some basic getting-started steps below.

Using Helm to Deploy basic-svc

# pass k3s cluster config to helm

$ sudo helm install demo-svc . --kubeconfig /etc/rancher/k3s/k3s.yaml

# send a json request to the service as we did before

$ http 10.43.166.150/health

HTTP/1.1 200 OK

Content-Length: 66

Content-Type: application/json

Date: Mon, 13 Apr 2020 11:27:29 GMT

{

"Hostname": "basic-svc-7bc858fb89-mkkqp",

"IpAddress": "10.42.2.10"

}

# list

$ sudo helm list --kubeconfig /etc/rancher/k3s/k3s.yaml

NAME NAMESPACE REVISION UPDATED STATUS CHART APP VERSION

demo-svc default 1 2020-04-13 07:26:38.817... basic-svc-0.0.1 1

# cleanup

$ sudo helm uninstall demo-svc --kubeconfig /etc/rancher/k3s/k3s.yaml

We’ve completed a lot. We now have a docker workflow that can create images for Linux amd64, Amazon EC2 A1 64-bit Arm instances, Raspberry Pis running armv7, and potentially more. We then wrote some yaml and a Helm chart to deploy our container into our K3S cluster. Lastly, we interacted with our service pod.

References

Building Multi-Arch Images for Arm and x86 with Docker Desktop